“A Lie Can Travel Halfway Around the World While the Truth Is Putting On Its Shoes”

Last Saturday saw a protest in London against the COVID-19 lock down. Its architects were global warming denialist Piers Corbyn and David Icke, a man who alleges that the British Royal Family are actually giant lizards. Cue a deep dive into COVID-19 conspiracy theories. No one can agree which conspiracy is the correct one but there definitely is one. Wake up sheeple!

The above tweet from Joe Politics showing anti-lock down protesters caught my attention as it nicely displays the general themes these lies have taken: the virus isn’t real, it’s being used as an excuse by governments, and 5G is behind the whole thing. I thought I’d go through each of the claims made by the protesters in the video and look to see if the science backs them up. In summary, it doesn’t. For more information, read on.

“I believe that the virus is real, but it’s not as bad as they are saying it is”

“The mortality rate for this is less than the ‘flu. It’s been proven”

I’ve grouped these two claims together as they are on a similar theme and echo sentiments made by people in power such as Brazilian President Jair Bolsonaro. Is COVID-19 as bad as is made out?

It depends on which ‘flu you mean. Influenza is a disease which has been with mankind for a long time. Hippocrates, the Ancient Greek physician, described what sounds like ‘flu over 2000 years ago. It is caused by a virus of which there are three kinds: A, B and C. The most common is Influenza A. Influenza A viruses contain two proteins, haemagglutinin and neuraminidase, which are abbreviated to ‘H’ and ‘N’. Strains of influenza A are distinguished by the types of these proteins, or antigens, they express on their outer surface; H1N1 being one strain, H2N2 being another and so on.

There’s been a lot of talk of the influenza pandemic of 1918-1919; often called ‘Spanish ‘flu’. This was caused by H1N1 Influenza A. It’s estimated that it infected 500 million people worldwide, killing 50 million. That’s a mortality rate of 10%.

By comparison as of 1st September 2020 there have been 25,694,471 confirmed cases of COVID-19, including 855,962 deaths, reported to the World Health Organisation (WHO). That’s a mortality rate of 3.33%. So he’s right, COVID-19 has a mortality rate about a third of Spanish ‘flu.

But hang on. Because we have lived so long with influenza we have entered into an ‘arms race’: the virus mutates, we develop immunity, the virus becomes less deadly until a new mutation and so on. This is the difference between seasonal ‘flu we experience every year and pandemic ‘flu.

Since 1919 we have also developed influenza vaccines, not to mention made vast improvements in public health. The H1N1 virus was also behind the swine ‘flu pandemic of 2009-2010, some 90 years after the Spanish ‘flu. Same virus, 90 years of medical improvements later. The WHO reported 491,382 confirmed cases and 18,449 deaths. That’s a mortality rate of 3.75%. Only slightly more than COVID-19.
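The percentages quoted so far are simply reported deaths divided by reported cases (a crude case fatality rate). Here is a minimal sketch of that arithmetic using only the figures quoted in this post; the function name is my own and purely illustrative.

```python
def crude_fatality_rate(deaths, cases):
    """Return reported deaths as a percentage of reported cases."""
    return 100 * deaths / cases


# (deaths, cases) exactly as quoted in the text above.
outbreaks = {
    "Spanish 'flu (1918-1919)": (50_000_000, 500_000_000),
    "COVID-19 (to 1 Sept 2020)": (855_962, 25_694_471),
    "Swine 'flu (2009-2010)": (18_449, 491_382),
}

for name, (deaths, cases) in outbreaks.items():
    print(f"{name}: {crude_fatality_rate(deaths, cases):.2f}%")
```

Bear in mind that these are ratios of confirmed cases only, so they say as much about how much testing was done as about the disease itself.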

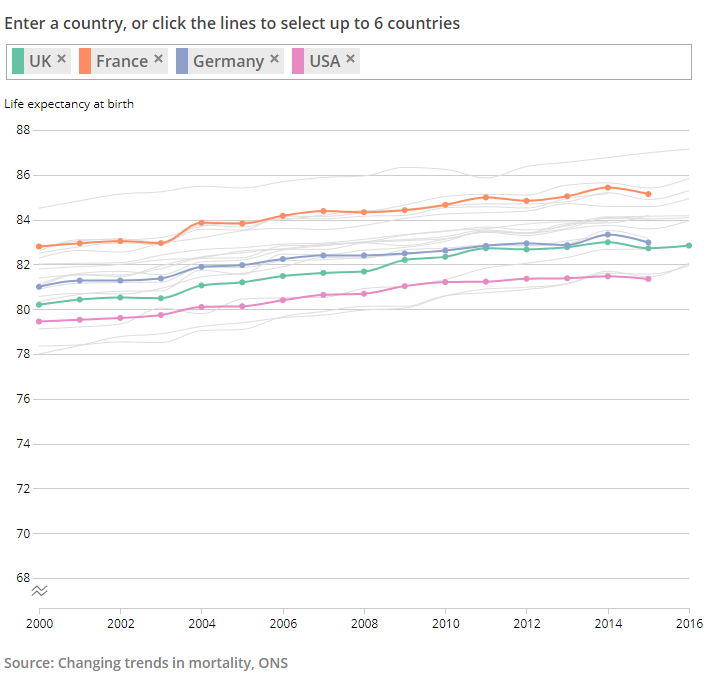

What about a different Influenza A virus? The Asian ‘flu pandemic (H2N2) of 1957 to 1958 had a mortality rate of 0.3% in the UK. Much less than the 12.4% mortality we’ve seen with COVID-19 in the UK at the time of writing.

Data for the 2019-2020 influenza season is still being collected and, understandably, research has been superseded by the COVID-19 pandemic. When saying one disease is ‘worse’ than another it’s important to try and limit differences as much as possible. Here in the UK we have a much more sophisticated healthcare infrastructure than a lot of the world, and some diseases which cause huge numbers of deaths worldwide, such as malaria, are non-existent here.

To that end let’s look at numbers as focused and comparable as possible.

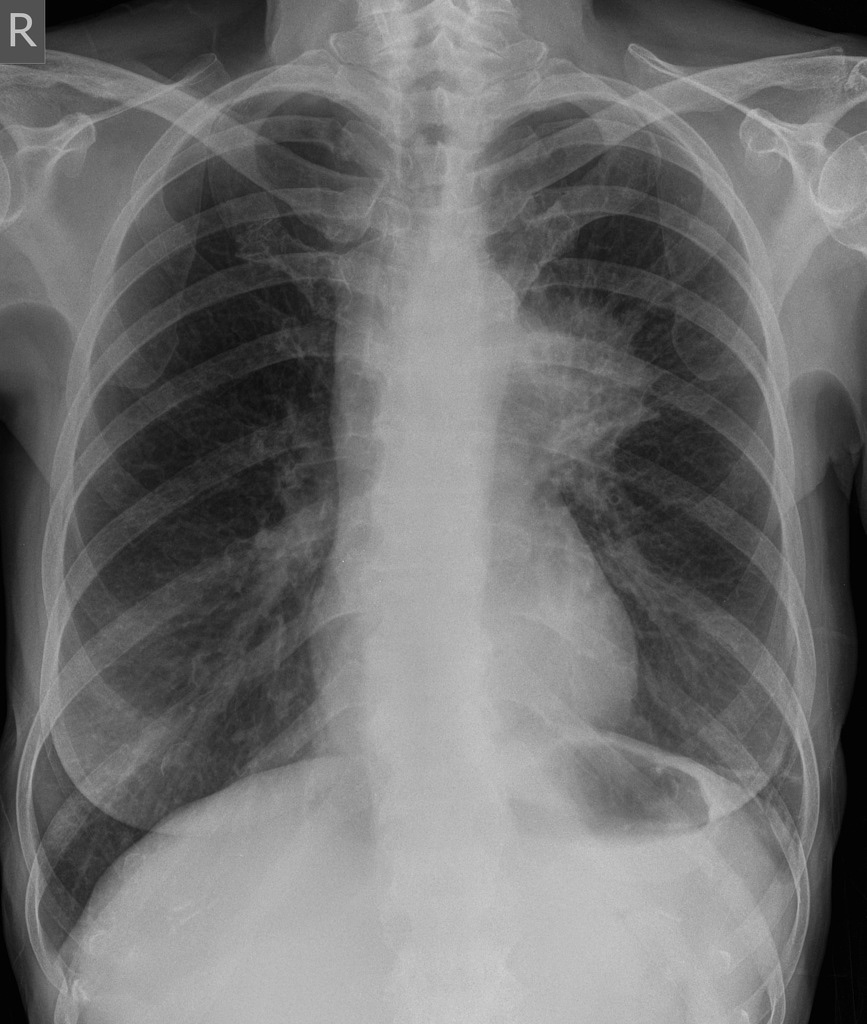

On 3rd June 2020 the Scottish Intensive Care Society Audit Group published a report on COVID-19. Between 1 March 2020 and 16 May 2020 there were 504 patients with confirmed COVID-19 admitted to intensive care units in Scotland. Of these patients, 38% died.

Looking at data available from the 2018-2019 influenza season tells us that from October 1st 2018 to April 8th 2019 only 166 patients with influenza were admitted to an intensive care unit in Scotland. Of these patients, 19% died.

In other words, in about 11 weeks over three times as many patients in Scotland were admitted to intensive care with COVID-19 as were admitted over 27 weeks with influenza. The mortality rate for COVID-19 was double that of influenza.

Three times the number of patients. Double the mortality rate. In less than half the amount of time.
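For anyone who wants to check the Scottish comparison, here is a rough sketch using only the admissions, death percentages and dates quoted above. The variable names are mine, and the period lengths are computed from the stated start and end dates.

```python
from datetime import date

# Figures and dates as quoted from the two Scottish intensive care reports.
covid = {"admissions": 504, "died_pct": 38,
         "start": date(2020, 3, 1), "end": date(2020, 5, 16)}
flu = {"admissions": 166, "died_pct": 19,
       "start": date(2018, 10, 1), "end": date(2019, 4, 8)}


def weeks(period):
    """Length of a reporting period in weeks."""
    return (period["end"] - period["start"]).days / 7


admission_ratio = covid["admissions"] / flu["admissions"]
mortality_ratio = covid["died_pct"] / flu["died_pct"]
time_ratio = weeks(covid) / weeks(flu)

print(f"admissions: {admission_ratio:.1f} times as many")
print(f"mortality:  {mortality_ratio:.1f} times as high")
print(f"time:       {time_ratio:.0%} of the influenza reporting period")
```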

The CDC in the USA has recorded 34,157 deaths due to influenza in the 2018-19 ‘flu season. This represents 10.4 deaths per 100,000 people in the USA. As of 11th June 2020, the World Health Organisation has recorded 111,978 deaths in people in the US who tested positive for COVID-19. This represents 32 deaths per 100,000.

This disease is bad. It has a lower mortality rate than the worst influenza pandemic ever but that is hardly a comfort. Spanish ‘flu’s mortality rate is dwarfed by that of the Black Death where it’s estimated a third of the population of Europe died. Does this make deaths due to Spanish ‘flu inconsequential? Of course not. Once again. This disease is bad. It’s much worse than seasonal ‘flu and even some pandemic ‘flu.

“The test that they use, it has an 80% false positive. This virus has never been proven to exist”

Let’s start with the bit about the virus not existing.

Viruses are bizarre. First, there’s what they actually are. They are just a collection of genetic material wrapped in an envelope of proteins and sugars. That’s it. Then there’s their size. They are tiny. You could fit 100,000 of even the largest species of viruses on a full stop. As a result, whilst the existence of an infective agent smaller than bacteria was hypothesised in the 19th century, it wasn’t until 1931 that we could actually see them. This was due to the invention of the electron microscope, which uses beams of electrons rather than light. Then there’s how they multiply. By themselves, viruses appear inert. It is only when they infect a host that they are able to multiply. They inject their genetic material into the host’s cells, which hijacks the cells’ normal functioning. The cell instead starts producing more virus until it ‘explodes’, releasing the new virus which can infect more cells and so on.

The severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2) virus behind COVID-19 is a member of the coronavirus ‘family’ of viruses. Coronaviruses were first identified in chickens in the 1930s and in humans in the 1960s. Their name comes from the Latin for crown because, when viewed down an electron microscope, the spiky proteins on their surface look like the points of a crown. They are common and have been found in a variety of animals from bats to camels. It’s estimated that about a quarter of all cases of the common cold are caused by coronaviruses. Their pandemic potential has also been proven. The Severe Acute Respiratory Syndrome (SARS) pandemic of 2003 (8096 cases, 774 deaths) and Middle East Respiratory Syndrome (MERS) pandemic of 2012 (2494 cases, 858 deaths) were both caused by a coronavirus.

The first cases of COVID-19 were identified in an unusual pneumonia in China in December 2019. Within a month SARS-CoV-2 had been genetically sequenced. In February the first electron microscopy pictures of the virus were released. We’re tracking its mutation rate. We have modelled the structure of the virus’s membrane and the receptors in humans it targets and replicated these in mice.

So to clarify: this virus comes from a family we know a lot about and which has already been shown to cause pandemics. Within a couple of months of becoming aware of it, we have been able to sequence its genetic material and even take photographs of it. We know how it targets us and we’re tracking its evolution. It exists. It has been proven to exist.

What this illustrates is a fact about conspiracy theories and theorists. Whilst one of the interviewed protesters was happy to admit the virus is real, this gentleman claimed it hasn’t been proven to exist. If there’s a conspiracy, what is it? You see this with claims about JFK, the moon landings or vaccines. Everyone has their own ideas: it was the mafia, it was the CIA etc. Surely if there is a conspiracy only one of them can be right? But which one? Maybe the reason there are so many conspiracy theories is that they’re all wrong, whereas people following an actual scientific method tend to arrive at the same conclusions: Lee Harvey Oswald killed JFK, the moon landings happened, vaccines are safe and COVID-19 exists. That’s not a conspiracy. It’s just the reason why we have a scientific method in the first place.

Now, what about this claim about 80% false positives?

No test is perfect and there are ways of being able to assess any test used in Medicine. One is the ability of a test to detect disease in someone who has it. This is sensitivity. Another is a test’s ability to rule out disease in people who don’t have it. This is specificity.
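To make those two definitions concrete, here is a small sketch. The counts are invented purely for illustration (they are not from any real evaluation of a COVID-19 test), and the function names are my own.

```python
def sensitivity(true_pos, false_neg):
    """Share of people WITH the disease that the test correctly flags."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg, false_pos):
    """Share of people WITHOUT the disease that the test correctly clears."""
    return true_neg / (true_neg + false_pos)


# Hypothetical counts, invented for illustration only (not real trial data).
true_pos, false_neg = 70, 30   # 100 people who have the disease
true_neg, false_pos = 95, 5    # 100 people who do not

print(f"sensitivity:         {sensitivity(true_pos, false_neg):.0%}")
print(f"specificity:         {specificity(true_neg, false_pos):.0%}")
print(f"false negative rate: {1 - sensitivity(true_pos, false_neg):.0%}")
print(f"false positive rate: {1 - specificity(true_neg, false_pos):.0%}")
```

The point of the sketch is only that false negatives are the flip side of sensitivity and false positives are the flip side of specificity.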

This chap is alleging that the test for COVID-19 has a false positive rate of 80%. He’s claiming that 80% of positive results for COVID-19 are wrong; that people without COVID-19 are testing as positive. Therefore he is alleging that the test for COVID-19 is not specific enough; that it is failing to rule out disease in people who don’t have it. Never mind the fact that if the virus didn’t exist the false positive rate would actually be 100%, let’s explore that figure of 80%. That doesn’t sound right. That’s because it isn’t.

Firstly it’s important to look for a disease in the right place. The Ebola virus, for example, is spread by direct contact with body fluids. The blood of a patient with Ebola as well as other fluids such as vomit is therefore full of the virus. COVID-19, by comparison, is spread by coughing up droplets containing the virus and so it sticks to the respiratory system.

A study in China looked at different samples taken from patients known to have COVID-19. In all, they looked at over one thousand samples taken from over two hundred patients. Only 1% of the blood samples taken showed the virus. By contrast, the best sample for detecting the virus was bronchoalveolar lavage: a test performed by shooting water into the deep airways of a patient and then collecting it to see what the washing picks up. 93% of these samples found the virus. But this is a test only performed on sedated patients; it can’t be used in mass testing. The study also looked at swabs taken from the nose and throat (the tests we use most commonly) and found they detected the virus in 63% and 32% of patients respectively. This suggested a false negative rate of 37% for nasal swabs and 68% for throat swabs. According to Dr Jessica Watson, a GP studying the quality of diagnostic tests who appeared on a recent episode of the BBC Radio 4 show More or Less, the nose and throat swabs being used in the UK currently pick up the virus in about 70% of patients showing symptoms.
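Because every patient in that study was already known to have COVID-19, any sample that failed to find the virus counts as a false negative. A quick sketch of that subtraction, using only the detection rates quoted above (the dictionary names are mine):

```python
# Detection rates (percent) in known-positive patients, as quoted from the study.
detection_pct = {
    "bronchoalveolar lavage": 93,
    "nasal swab": 63,
    "throat swab": 32,
    "blood": 1,
}

# A false negative is a known-positive patient the sample failed to flag.
false_negative_pct = {sample: 100 - pct for sample, pct in detection_pct.items()}

for sample, pct in false_negative_pct.items():
    print(f"{sample}: {pct}% false negatives")
```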

This all indicates that the problem is with false negatives rather than false positives; with sensitivity (finding the disease in people who have it), not specificity (ruling it out in people who don’t). This makes sense. The test for the virus looks for its genetic material. In order to detect it, we run a polymerase chain reaction (PCR) test which takes a small sample of genetic material and makes more of it so that it can be analysed. This is the same principle used in forensics. If a swab is taken too early in the infection or too late there may not be enough virus around to detect. If the person taking the swab doesn’t use the right technique it won’t pick up enough, or any, virus to analyse in the first place. That’s before we even get onto the UK’s policy toward testing and the phenomenon of asymptomatic patients.

Rather than a false positive rate of 80%, there is, in fact, a false negative rate of about 30%. Far from over-diagnosis, the problem is under-diagnosis. On both counts this man is wrong.

“If you look up radiation poisoning, the effects of that and the effects of COVID-19 fit together like a glove”

Ah, the 5G conspiracy. 100 5G masts have been set on fire in the UK due to the belief that the wireless network either created the COVID-19 virus or has weakened our immune system to the point that we’re more susceptible to the virus. Of course, this is nonsense. Let’s look at why.

5G is so-called because it is the fifth generation network being used by mobile phones. The idea is that it uses radio waves of a higher frequency (the number of waves in a given period of time) than previously used so a greater amount of information can be transmitted. Waves at a greater frequency can’t travel as far so more towers are needed to transmit. Radio waves are a form of electromagnetic radiation. Uh-oh, radiation. That’s bad, right? Not necessarily. All forms of electromagnetic radiation exist on a spectrum, the electromagnetic spectrum, with waves of lower frequency on one end and those of higher frequency on the other. Those waves of the highest frequency are called ‘ionising radiation’; the waves are able to interfere with the structure of atoms. It is ionising radiation which can damage DNA. Lower frequency, or non-ionising, radiation cannot do this as the waves don’t carry sufficient energy. Even though 5G uses higher frequency radio waves than earlier networks, their frequency is still so low they sit comfortably on the non-ionising side of the spectrum. As well as nuclear radiation, the high-frequency end of the spectrum includes ultraviolet light. This is why sunburn is so serious: ultraviolet waves carry enough energy to damage skin and mutate DNA to cause cancer. Visible light, whose frequency is far higher than anything 5G uses, is still non-ionising. But of course, no-one protests against the Sun. Or light bulbs.
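The link between frequency and energy can be put into numbers with Planck’s relation, E = h × f. The sketch below is only illustrative: the frequencies are round figures I have picked as assumptions (they are not taken from any 5G specification), and the function name is my own. For scale, stripping the electron from a hydrogen atom takes about 13.6 electronvolts (eV).

```python
PLANCK_J_S = 6.62607015e-34       # Planck constant in joule-seconds
JOULES_PER_EV = 1.602176634e-19   # one electronvolt expressed in joules


def photon_energy_ev(frequency_hz):
    """Energy of a single photon, E = h * f, in electronvolts."""
    return PLANCK_J_S * frequency_hz / JOULES_PER_EV


# Illustrative round-number frequencies (assumptions, not from any spec).
examples = {
    "mobile signal at 3.5 GHz": 3.5e9,
    "mobile signal at 30 GHz": 30e9,
    "visible (green) light": 5.5e14,
    "far ultraviolet": 3e15,
}

for name, freq in examples.items():
    print(f"{name}: {photon_energy_ev(freq):.2e} eV")
```

On these assumed figures the radio photons come out tens of thousands of times weaker than visible light, which is itself too weak to ionise.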

But let’s for one moment break the rules of physics and pretend that 5G is ionising radiation. Luckily there’s a resource for healthcare workers called Toxbase which gives advice on all things toxicological. For patients recently exposed to ionising radiation it gives these symptoms:

“Nausea, vomiting, anorexia, mild pyrexia, erythema and diarrhoea developing hours to days following exposure. Earlier onset of clinical features indicates higher absorbed dose. Conjunctivitis may occur if the eyes have been exposed”.

The WHO gives this information on the symptoms of COVID-19:

“The most common symptoms of COVID-19 are fever, dry cough, and tiredness. Other symptoms that are less common and may affect some patients include aches and pains, nasal congestion, headache, conjunctivitis, sore throat, diarrhea, loss of taste or smell or a rash on skin or discoloration of fingers or toes. These symptoms are usually mild and begin gradually. Some people become infected but only have very mild symptoms.”

Hardly fitting like a glove.

Let’s now listen to the laws of physics and look up what non-ionising radiation (what 5G actually is) can do to you:

“Skin burns, which may be more penetrating than burns from a thermal source.

No consistently replicable effects have been found from exposures at levels below those that produce detectable heating, in particular there is no convincing evidence of common symptoms (e.g. headaches), genetic damage or increased likelihood of cells becoming malignant due to acute or chronic radio frequency exposures (PHE)”.

Again not even fitting like a glove (you’ll have to imagine me doing that thing he did with his hands).

There are only so many symptoms a disease can cause. Just because different disease processes have similar symptoms it doesn’t mean those diseases are the same. High blood pressure and brain tumours are two very different things yet both can cause headaches. The art of Medicine is in exploring a symptom with history and investigations to find the cause. Not just reading a list online.

This is not the only myth about 5G. Another is that 5G weakens our immune system. Another is that somehow it caused this virus. Both are biological impossibilities. The scruples of people peddling these lies can be shown by the peddling of the £339 ‘5GBioShield’ which claimed to provide “protection for your home and family, thanks to the wearable holographic nano-layer catalyser.” It was found to just be a normal USB stick.

I can not emphasise this enough: There is no evidence that 5G is bad for you. It does not cause COVID-19.

“You don’t lock the world up for a virus that has a mortality rate of less than 1%”

See above. Its worldwide mortality rate is currently 3.33%. That’s more than 1%. Not less.

But anyway, why did we lock the world up? The reason is not just the number of people who might die but also the burden placed on healthcare systems. One way of looking at how infectious a disease is, is to measure its basic reproduction number, called R nought (R0).

One study in Wuhan, China found that COVID-19 had an R0 of 3. This means that every patient infected another 3 people and so on. That doesn’t sound much but in just 13 steps:

1 -> 3 -> 9 -> 27 -> 81 -> 243 -> 729 -> 2187 -> 6561 -> 19683 -> 59049 -> 177147 -> 531441 -> 1594323

One case could become more than 1.5 million. As the disease emerged it became apparent that 5% of patients infected developed critical illness. That would be 75,000 patients needing critical care. In the most recent data available before the COVID-19 lock down there were 4,122 intensive care beds in England. 1.5 million patients, 75,000 of whom need a critical care bed, would easily overwhelm our health service.
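The chain above is just repeated multiplication by three. A minimal sketch of it, using only the figures quoted in this section and assuming (as the chain does) that every case infects exactly R0 others:

```python
R0 = 3                    # each case infects three others (the Wuhan estimate)
STEPS = 13                # generations of spread
CRITICAL_SHARE = 0.05     # 5% of patients become critically ill
ICU_BEDS_ENGLAND = 4_122  # intensive care beds before lock down

# Size of each generation: 1, 3, 9, ... up to 3 to the power 13.
chain = [R0 ** step for step in range(STEPS + 1)]
latest_generation = chain[-1]
critical_cases = round(latest_generation * CRITICAL_SHARE)

print(" -> ".join(str(n) for n in chain))
print(f"cases in the final step: {latest_generation:,}")
print(f"needing critical care:   {critical_cases:,}")
print(f"beds available:          {ICU_BEDS_ENGLAND:,}")
```

Run on the unrounded final figure, the 5% comes out a little higher than the 75,000 I quoted from the rounded 1.5 million. Either way it is many times the number of beds.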

This is the reason behind lock down, behind ‘stay home, protect the NHS, save lives’ and flattening the curve. Through lock down measures in Wuhan the study found that the R0 was reduced from 3 to 0.3. This is the crucial step of beating an infectious disease: reaching the point where each patient is infecting fewer than one other person. This makes the difference between millions of patients and beating the virus. In the past week, Professor Neil Ferguson, a former senior member of the Scientific Advisory Group for Emergencies (SAGE), has claimed that the UK death toll could have been halved if lock down had been started a week earlier. A recent systematic review and meta-analysis (the highest level of scientific evidence) of the literature in the Lancet strongly supports the use of physical distancing measures in reducing the risk of infection.
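To see why getting below one matters so much, compare the total number of cases a single infection leads to at each of the two reproduction numbers from the Wuhan study. This is a deliberately crude sketch: it assumes each generation is exactly R times the size of the last, and the function name is my own.

```python
def expected_chain_size(r, generations):
    """Total cases from one initial case, summed over every generation."""
    return sum(r ** g for g in range(generations + 1))


before_lockdown = expected_chain_size(3, 13)    # reproduction number of 3
after_lockdown = expected_chain_size(0.3, 13)   # reproduction number of 0.3

print(f"R = 3:   {before_lockdown:,} cases in total")
print(f"R = 0.3: {after_lockdown:.2f} cases in total")
```

With a reproduction number of 3 the total keeps multiplying for as long as you let it run. With 0.3 it barely gets past the original case and then fizzles out.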

For what it is worth, measles does have a mortality rate of less than 1%, at 0.2%, although the disease carries a risk of serious complications. Despite its low mortality, measles is even more infectious than COVID-19 with an R0 of somewhere between 12 and 18. Last year, during an outbreak of measles in New York, a public health emergency was declared, with measures that foreshadowed the lock down. Non-vaccinated children were not allowed in public spaces, legislation allowing vaccine exemption was repealed and some pre-schools were closed. And that’s for a disease where there is a vaccine. So she is wrong. Both on the mortality rate of COVID-19 and on the lock down.

“I think we will be living in a far worse, dystopian version of Nazi Germany”

The Nazis were responsible for the systematic discrimination, persecution and murder of people based on a deranged idea of eugenics. They sent people to work as slaves and die purely because they didn’t fit the perceived Nazi ideal. They sought out the most vulnerable: the disabled and the young and made them their own plaything to experiment on. They murdered six million Jewish men, women and children. I am genuinely staggered how anyone could think a “far worse, dystopian” version of this possible much less make a casual prediction that a lock down designed to prevent a virus is going to bring it about. I can’t really say anything else whilst being polite.

So there we have it. Five conspiracy theories which don’t stand up to scientific scrutiny. I don’t expect this will change the minds of the converted, but maybe, as with vaccination, we can ring around ‘outbreaks’ of conspiracy and prevent them spreading further. Misinformation drives public health risks such as vaccine hesitancy. It’s not easy. I’ve tweeted about the lies of anti-vaxxers and been called everything from a clown to a stooge of big Pharma by people who feel their meme corresponds to a medical degree. But as US Senator Daniel Patrick Moynihan said, “You are entitled to your opinion. But you are not entitled to your own facts”.